VQ-VAE paper extension improving reconstruction error.

Developed a modification of the VQ-VAE model (image generation) that reduces the reconstruction error on the ImageNet dataset by a factor of 2.

VQ-VAE

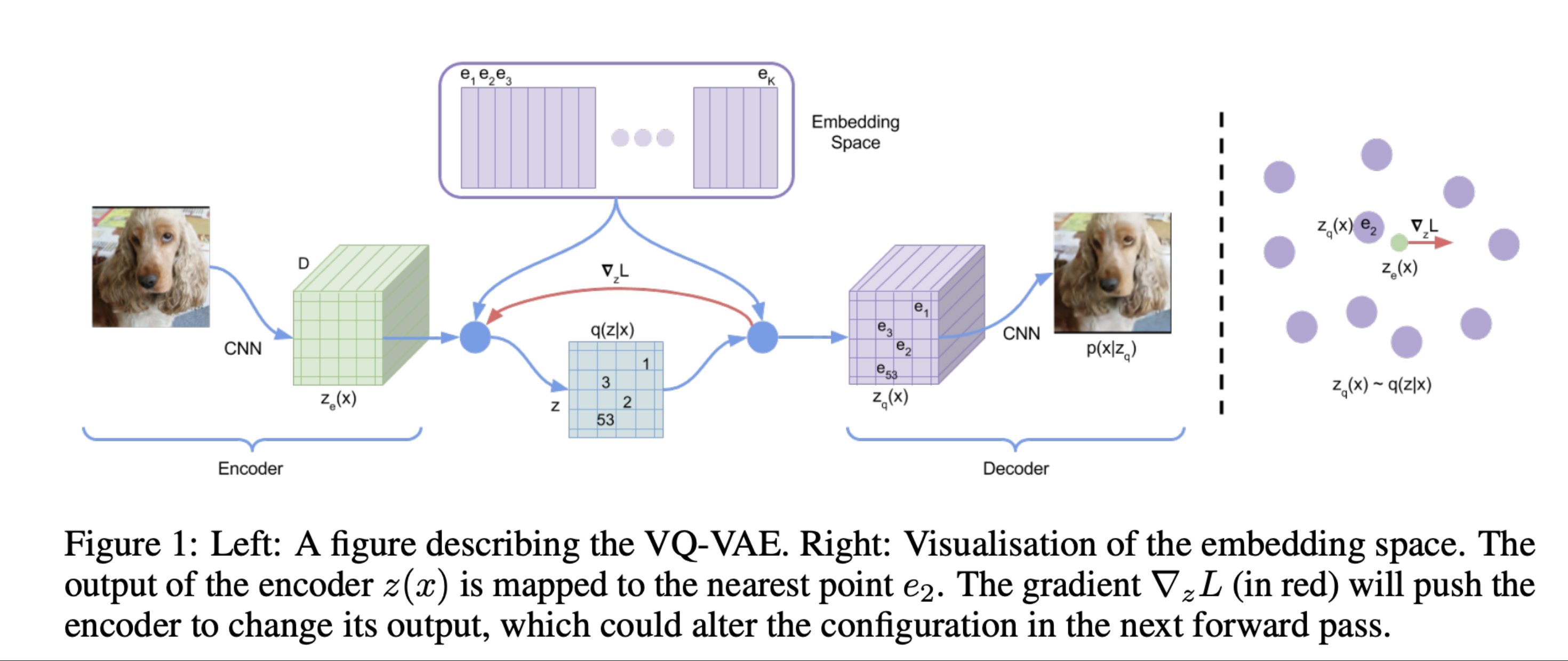

The Vector-Quantised Variational AutoEncoder (Aaron van den Oord, Oriol Vinyals, and Koray Kavukcuoglu. 2017) is a very popular model used for image generation. Closely related ideas underlie popular image generation products such as Stable Diffusion and DALL·E.
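The defining step of the original VQ-VAE is replacing each continuous encoder output with its nearest entry in a learned codebook. The following is a minimal NumPy sketch of that lookup only, not of the modification described in this write-up. The function name `quantize`, the variable names, and the toy sizes are illustrative assumptions, and training details such as the straight-through gradient and the codebook losses are left out.

```python
import numpy as np

def quantize(z_e, codebook):
    """Map each encoder vector to its nearest codebook entry.

    z_e:      (N, D) array of continuous encoder outputs.
    codebook: (K, D) array of embedding vectors.
    Returns (indices, z_q) with shapes (N,) and (N, D).
    """
    # Squared Euclidean distance between every latent and every code: (N, K).
    dists = ((z_e[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    # Index of the closest code for each latent vector.
    indices = dists.argmin(axis=1)
    # Discrete latents: the selected codebook rows.
    z_q = codebook[indices]
    return indices, z_q

rng = np.random.default_rng(0)
codebook = rng.normal(size=(8, 4))  # toy codebook: K=8 codes of dimension D=4
z_e = rng.normal(size=(5, 4))       # toy stand-in for 5 encoder outputs
indices, z_q = quantize(z_e, codebook)

# The information discarded by discretization is the gap between z_e and z_q.
quantization_error = np.mean((z_e - z_q) ** 2)
```

The gap between `z_e` and `z_q` is the detail that a finite codebook cannot carry to the decoder.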

Motivation

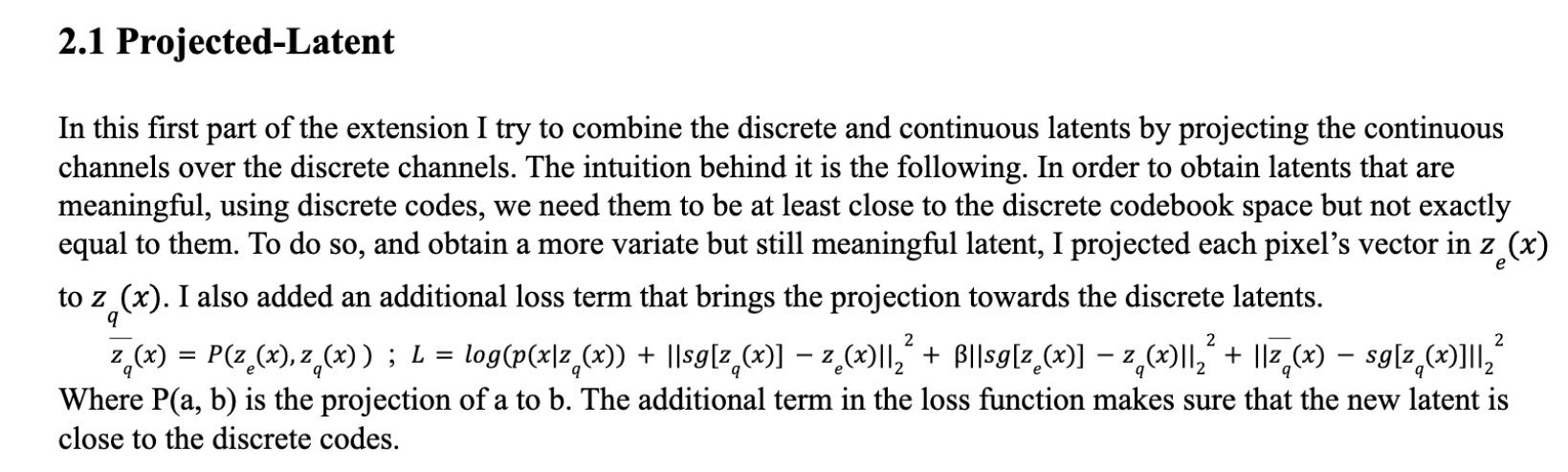

VQ-VAEs have grown in popularity over the last few years thanks to the strong results they have shown across generative AI tasks. They are based on an encoder-decoder architecture in which the latent variables are discretized. A discrete space is a more compact way of coding latent variables, and it seems a reasonable choice because many real-world objects have underlying discrete representations. Nevertheless, discretizing the latent space with a finite codebook, as VQ-VAE does, has limitations when generating new images: the codes can properly represent the underlying structure of an image but miss most of the details. Moreover, sampling from such a latent space can only produce a finite set of generated images.

To tackle these issues, I wondered whether the latent space could be encoded so that it keeps the discrete properties of VQ-VAE latents while combining them with the continuous latents produced by the encoder. This might make the latent space more varied and truer to reality. I have not found any research that uses both discrete and continuous latent variables to improve the generation process. In this report I study how such a combination could be done and whether it results in a better model.

Modifications of the original model

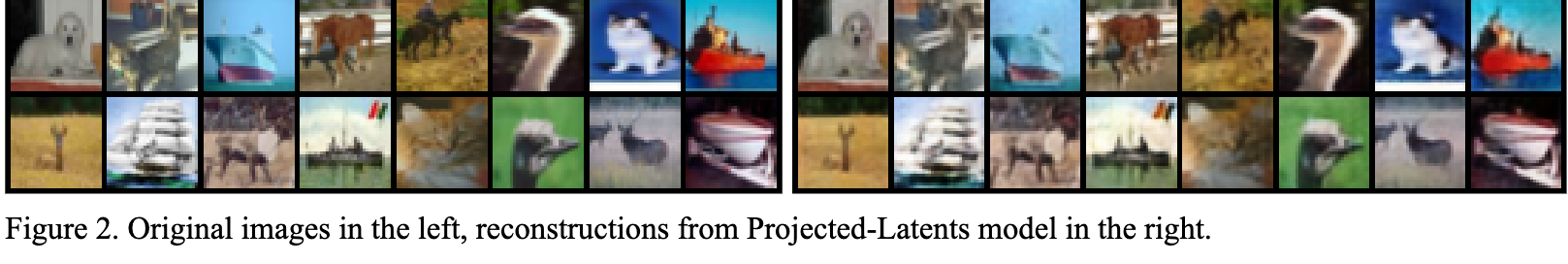

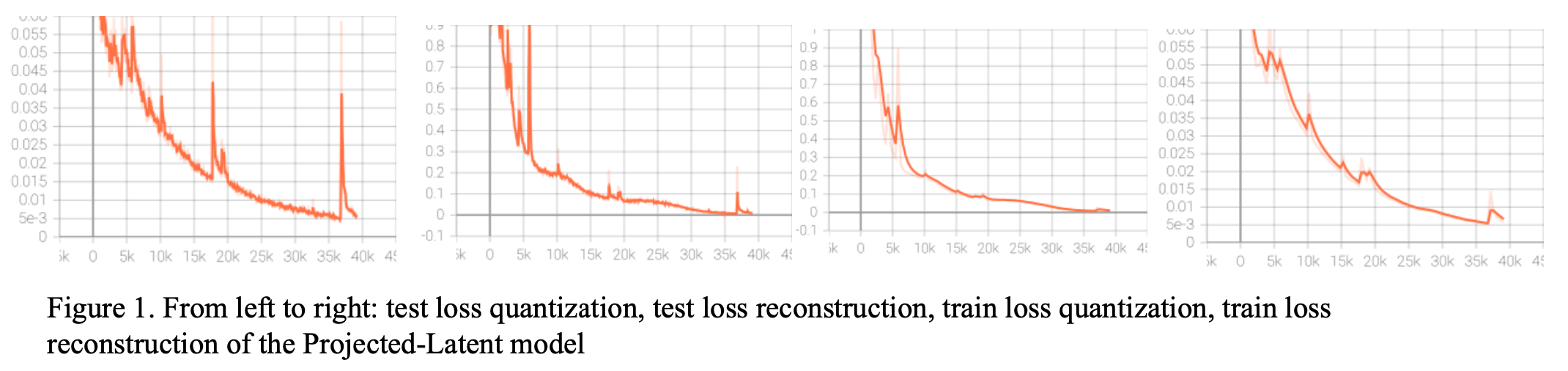

This modification of the algorithm improved the reconstruction error of the original model, yielding reconstructions that are very close to the original images and, in some cases, almost identical.

Results

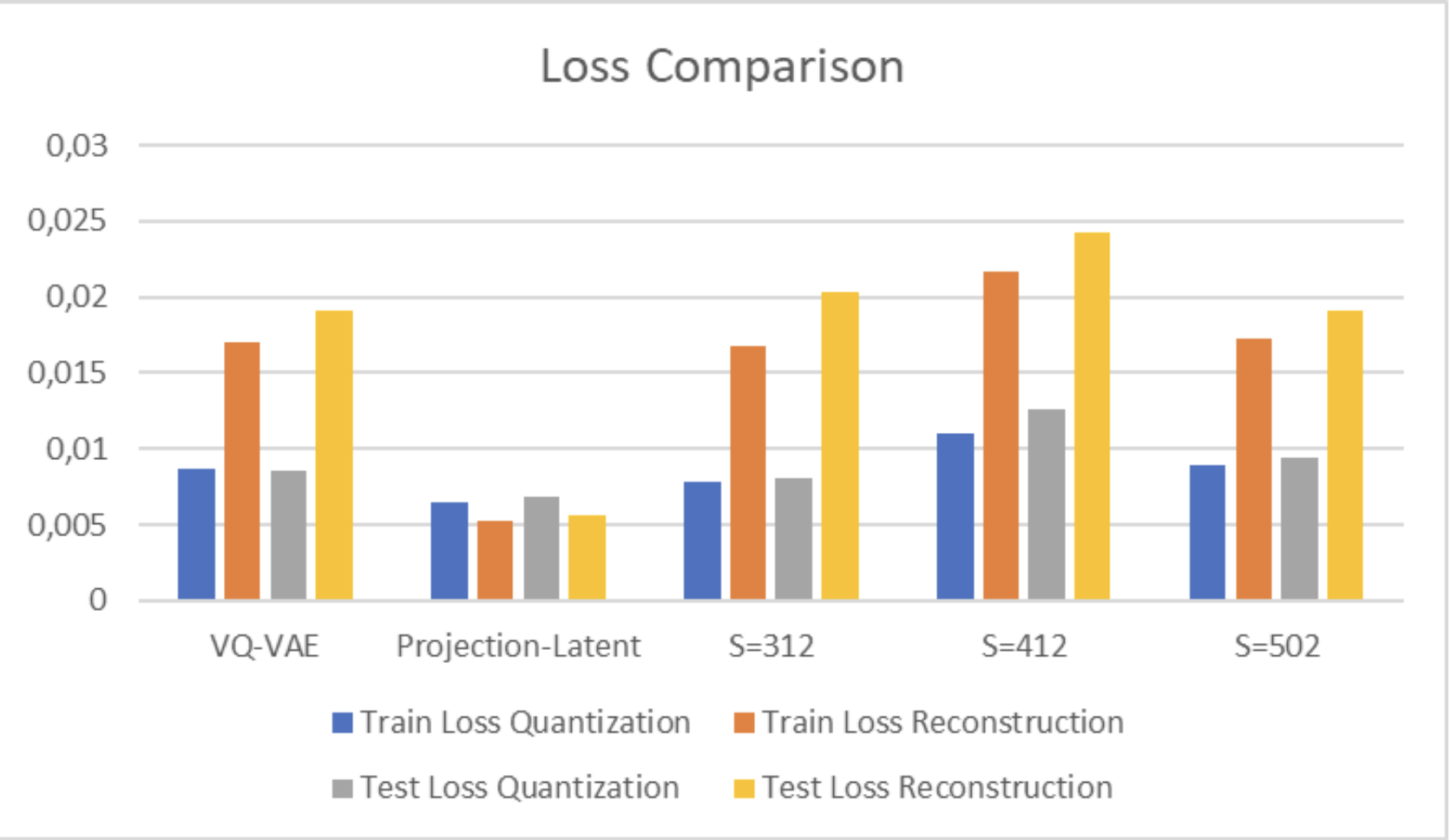

Overall, the proposed method achieves the best reconstruction error, as the accompanying figure shows.

Limitations

A discrete latent space makes it very easy to generate new images by sampling from it. When I use projected latents instead of discrete ones, the latent space becomes continuous, which makes it harder to sample and generate new images. To tackle this, one might place a prior distribution on the latent space, such as a Gaussian, and sample from it to generate new images.
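As a rough illustration of the Gaussian prior idea, the sketch below fits a diagonal Gaussian to a set of continuous latents and draws new latent vectors from it. Everything here is an assumption made for illustration: the helper names, the latent dimension, and the random array standing in for latents collected from a trained encoder. Whether such a simple prior yields good images was not evaluated in this work.

```python
import numpy as np

def fit_diagonal_gaussian(latents):
    """Estimate the per-dimension mean and standard deviation of (N, D) latents."""
    return latents.mean(axis=0), latents.std(axis=0)

def sample_latents(mean, std, n, rng):
    """Draw n latent vectors from N(mean, diag(std**2))."""
    return mean + std * rng.standard_normal(size=(n, mean.shape[0]))

rng = np.random.default_rng(0)

# Hypothetical stand-in for continuous latents gathered from a trained encoder.
train_latents = rng.normal(loc=2.0, scale=0.5, size=(1000, 16))

mean, std = fit_diagonal_gaussian(train_latents)
new_latents = sample_latents(mean, std, n=4, rng=rng)
# new_latents would then be passed through the decoder to produce images.
```

A diagonal Gaussian ignores correlations between latent dimensions, so a richer prior may be needed in practice.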

References

Aaron van den Oord, Oriol Vinyals, and Koray Kavukcuoglu. 2017. Neural discrete representation learning. In Proceedings of the 31st International Conference on Neural Information Processing Systems (NIPS’17). Curran Associates Inc., Red Hook, NY, USA, 6309–6318.