Entropy-Regularized Deep Reinforcement Learning with Linear Programming

A study of entropy regularization through linear programming formulations and its feasibility in large-scale settings

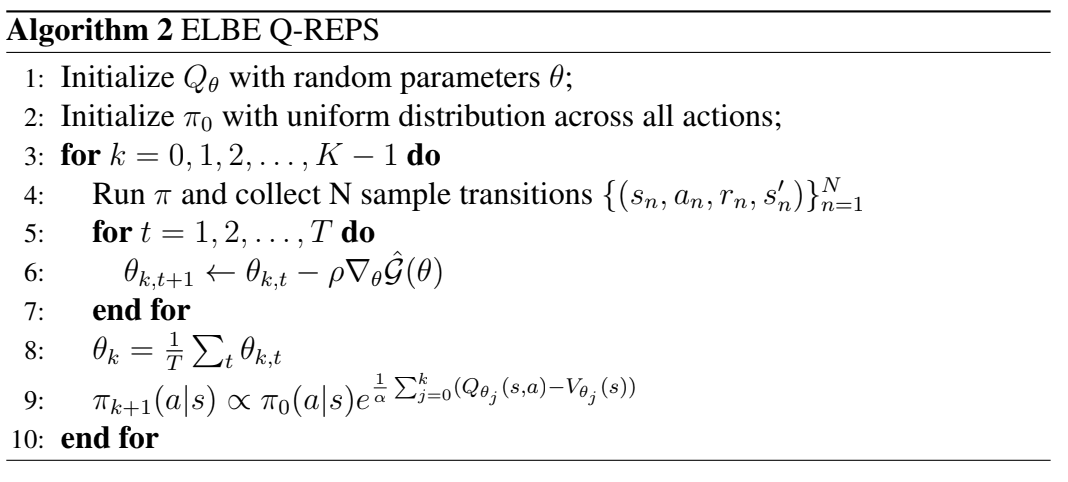

As part of my undergraduate thesis, I am researching several state-of-the-art regularized reinforcement learning algorithms, as well as closely related variants of them. The thesis centers on an alternative to traditional Deep RL, rooted in the linear program reformulation of Markov Decision Processes (MDPs). I implemented Q-REPS from the “Logistic Q-learning” paper using neural networks and demonstrated that this framework can also be used to create new Deep RL algorithms that compete with well-known ones like DQN, SAC or PPO, without needing tricks like gradient clipping, target networks or double Q-networks. I also propose a novel algorithm, “Primal-dual approximate policy iteration”, rooted in the same linear program reformulation, and show that it can be a strong alternative in large-scale settings too. For a detailed explanation of everything, I suggest reading the thesis PDF linked below.
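For readers unfamiliar with the linear program view of MDPs, its standard discounted form is sketched below. The notation is an assumption made for this illustration and may differ from the thesis: μ is the state-action occupancy measure, r the reward, P the transition kernel, ν₀ the initial-state distribution and γ the discount factor.

```latex
\begin{aligned}
\max_{\mu \ge 0} \quad & \sum_{x,a} \mu(x,a)\, r(x,a) \\
\text{s.t.} \quad & \sum_{a} \mu(x',a) = (1-\gamma)\,\nu_0(x') + \gamma \sum_{x,a} P(x' \mid x,a)\, \mu(x,a) \qquad \forall x'
\end{aligned}
```

Roughly speaking, REPS-style methods such as Q-REPS add a relative-entropy regularizer to this objective and work with the resulting dual, whose variables play the role of value functions.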

Q-REPS

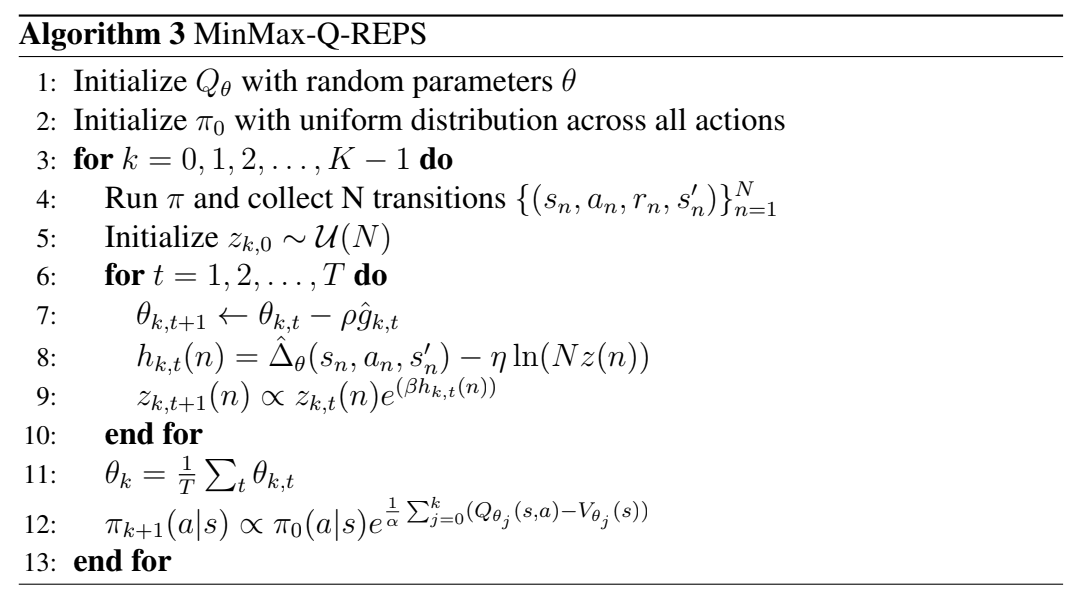

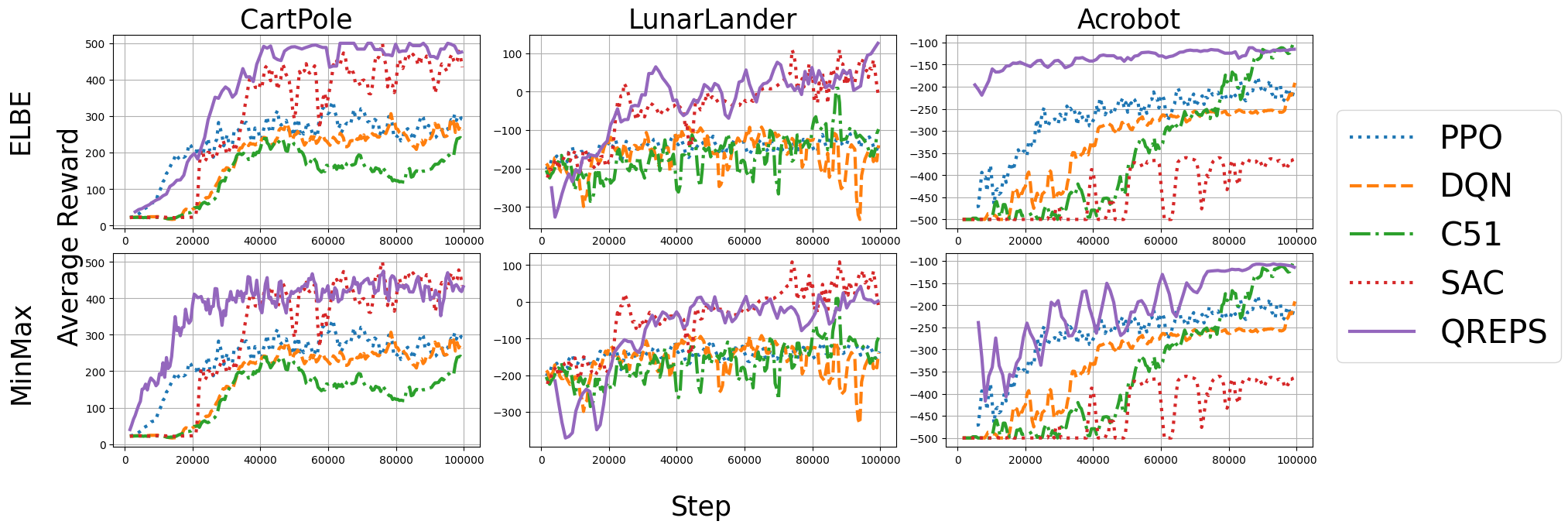

Algorithms

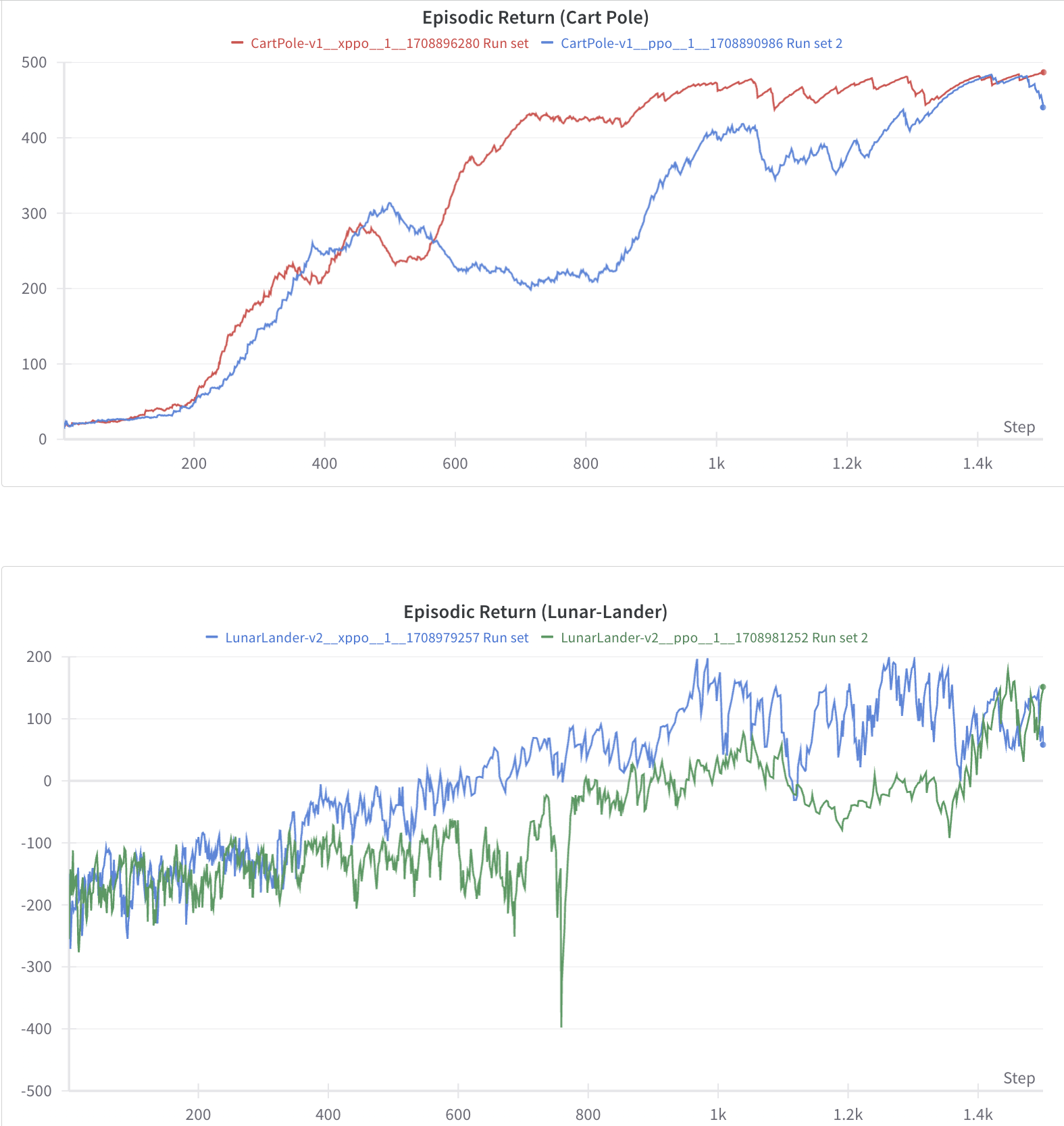

Reward Curves

Q-REPS Gameplay videos

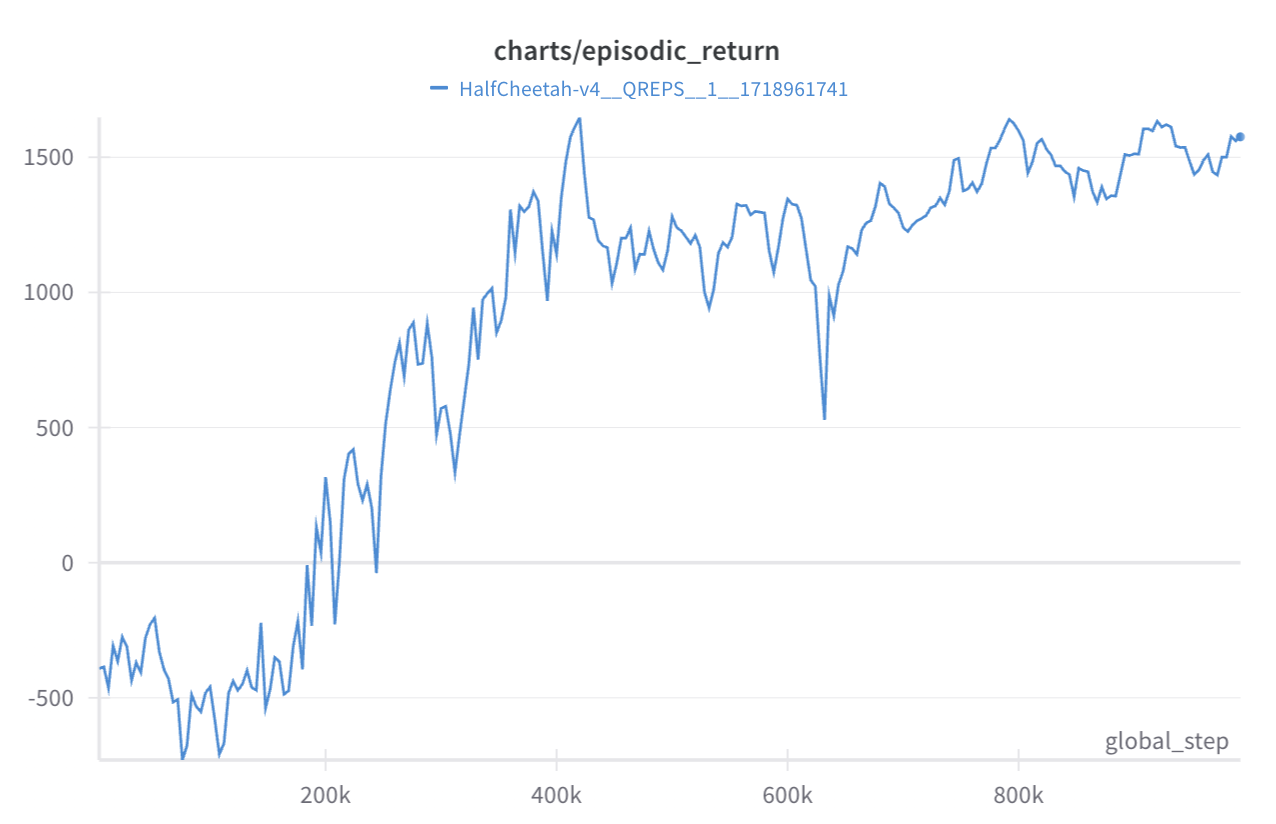

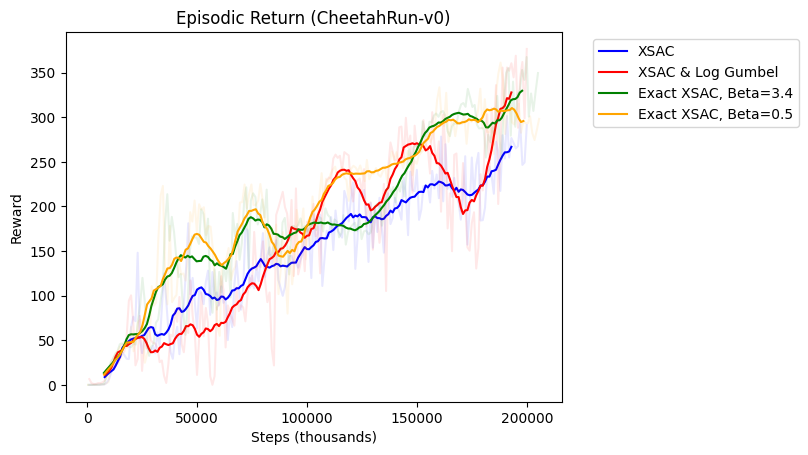

Q-REPS Extensions to continuous environments

With minor modifications, the original algorithm can also be made to work with continuous actions. Here is an example on the HalfCheetah environment.

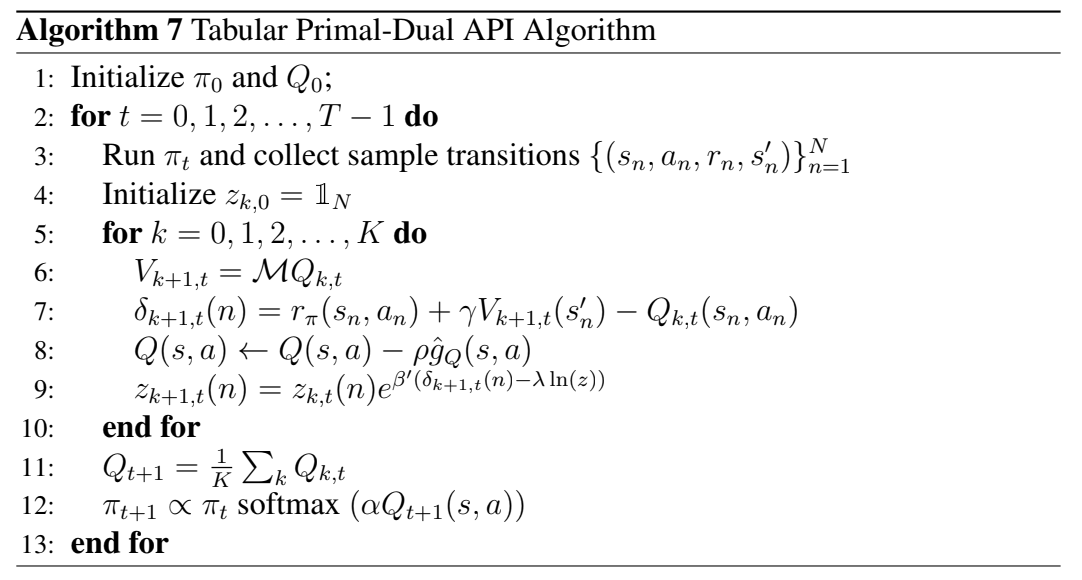

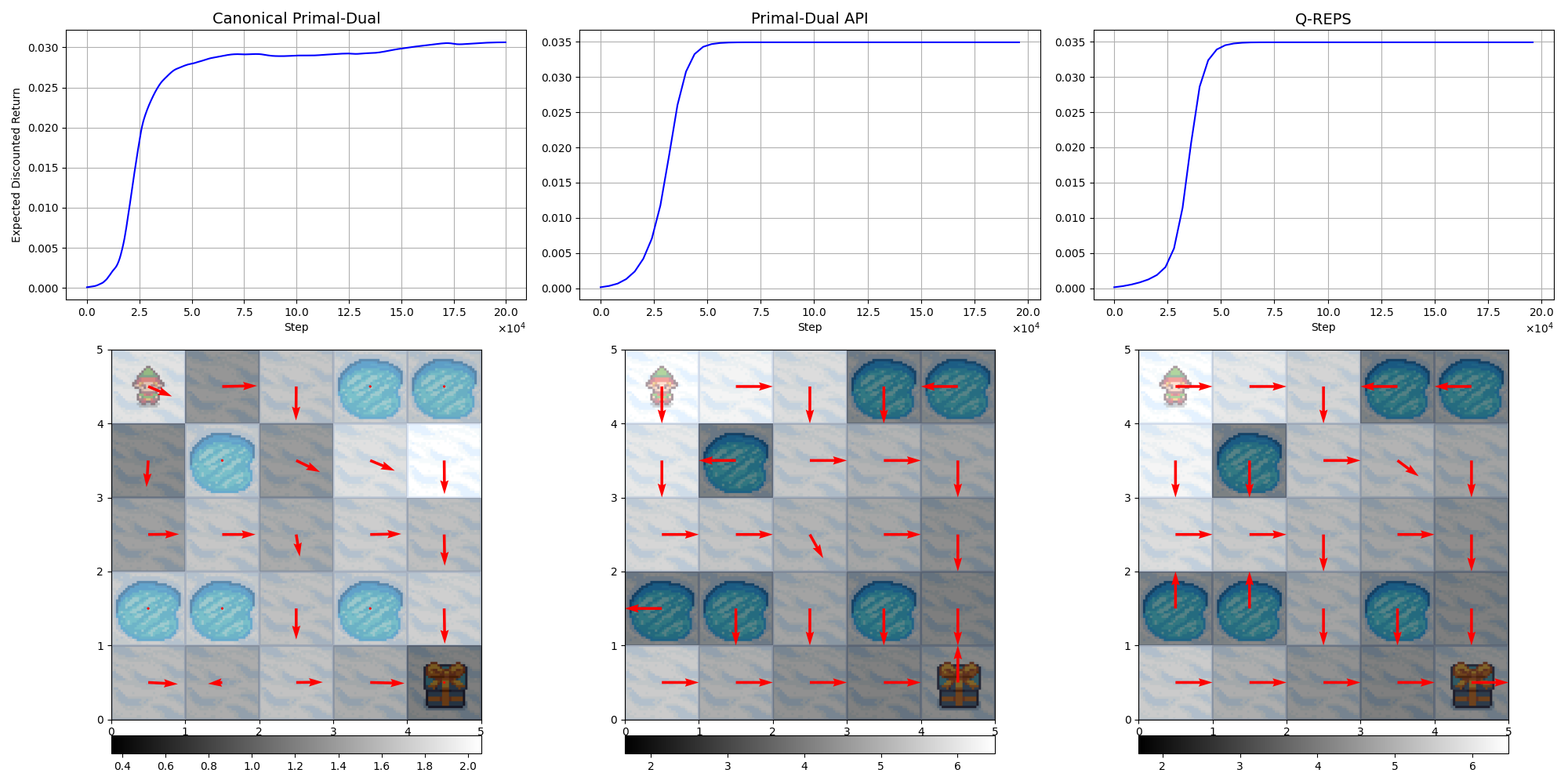

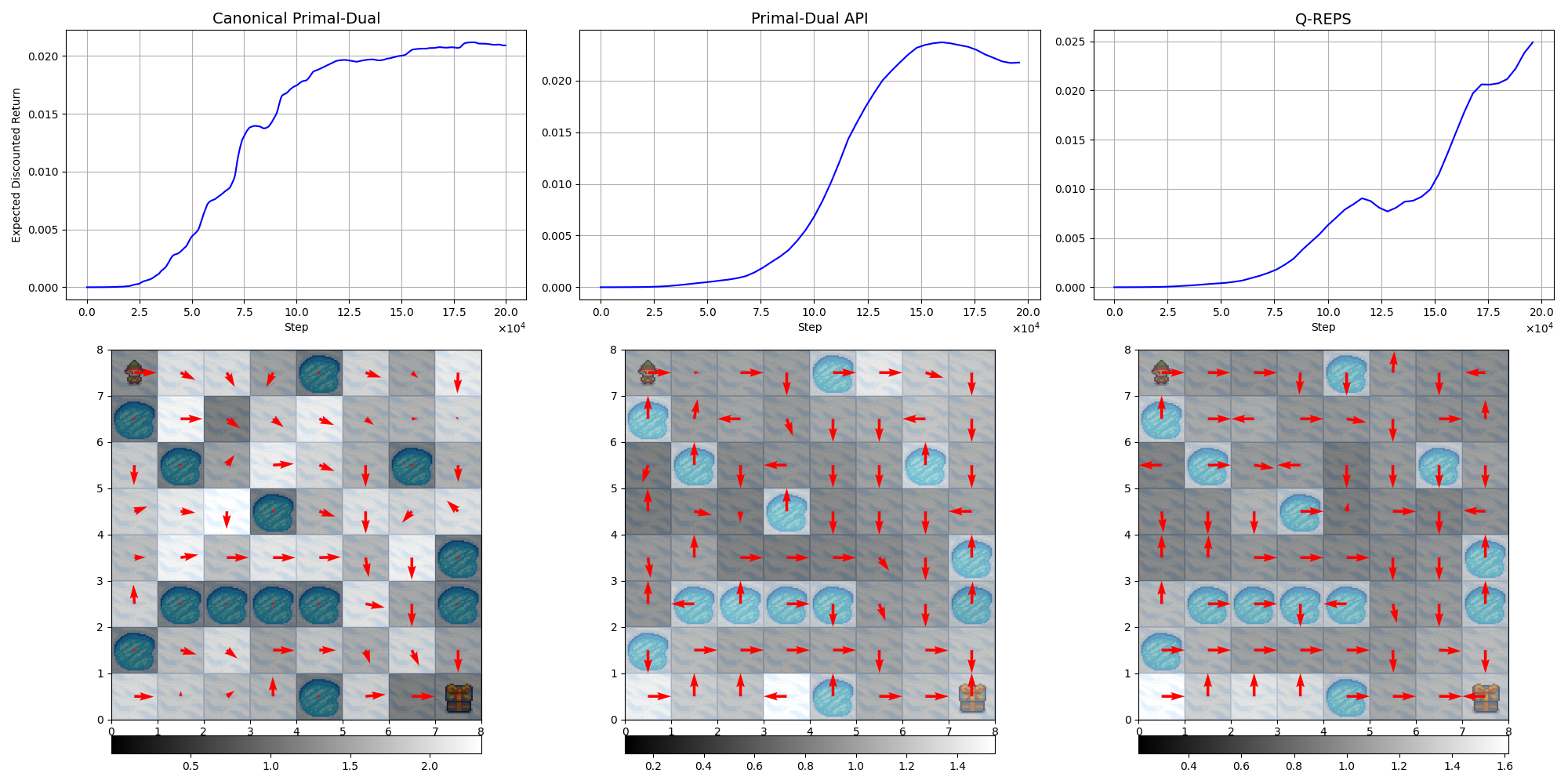

Primal-dual Approximate Policy Iteration

Algorithms

Tabular Results

Thesis pdf

Thesis presentation

Other work outside of the main thesis

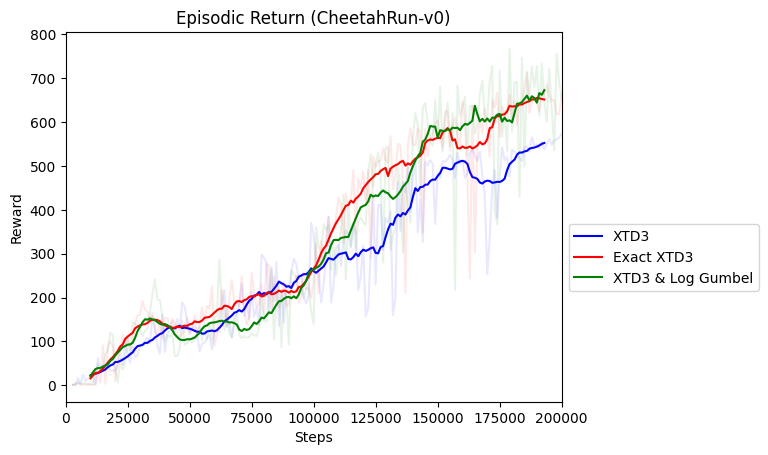

X-QL modifications

PPO modifications using Gumbel loss to learn the value function
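As a rough illustration of what a Gumbel loss for value learning looks like, here is a minimal NumPy sketch of the Gumbel regression objective used in X-QL. The function names, the temperature parameter `beta` and the toy targets are assumptions made for this example, not code from the thesis, and it leaves out any numerical safeguards a real training loop might need.

```python
import numpy as np

def gumbel_loss(targets, values, beta=1.0):
    """Gumbel regression loss: mean of exp(z) - z - 1, with z = (target - value) / beta."""
    z = (np.asarray(targets, dtype=float) - np.asarray(values, dtype=float)) / beta
    return float(np.mean(np.exp(z) - z - 1.0))

def soft_value(targets, beta=1.0):
    """Constant value minimizing the loss: beta * log(mean(exp(target / beta)))."""
    t = np.asarray(targets, dtype=float) / beta
    m = t.max()  # subtract the max before exponentiating for numerical stability
    return float(beta * (m + np.log(np.mean(np.exp(t - m)))))

if __name__ == "__main__":
    # Hypothetical value targets for a single state (illustrative numbers only).
    targets = [0.0, 1.0, 2.0]
    v_star = soft_value(targets, beta=0.5)
    print("soft value:", v_star)
    print("loss at soft value:", gumbel_loss(targets, v_star, beta=0.5))
    print("loss at plain mean:", gumbel_loss(targets, np.mean(targets), beta=0.5))
```

Unlike a squared-error loss, whose minimizer is the mean of the targets, this loss is minimized by a log-mean-exp (soft maximum) of the targets, which approaches the maximum as `beta` shrinks.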